News

RunAnywhere in the press

March 9, 2026

Regulated Industries Are Rewriting Their AI Architecture

Regulated industries across healthcare, financial services, and telecommunications are fundamentally rethinking how they deploy AI. Rather than relying on cloud-based solutions that promise scalability, these organizations are prioritizing control and compliance — moving inference operations from centralized cloud environments to edge devices where data never leaves the premises. The shift is driven by stricter data residency requirements, growing privacy regulations, and the need for direct oversight of sensitive information. Organizations can no longer afford the risk exposure that comes with routing patient records, financial transactions, or customer data through third-party cloud providers. Edge deployment reduces vulnerability to cloud-based breaches while enabling real-time decision-making. This represents a significant departure from the cloud-first AI paradigm that dominated enterprise technology strategies in recent years. Companies like RunAnywhere are providing the infrastructure layer that makes this architectural shift possible, enabling enterprises to run AI models directly on devices while maintaining the security, privacy, and compliance standards that regulated industries demand.

March 9, 2026

RunAnywhere Is Building the Infrastructure Layer for On-Device AI at Scale

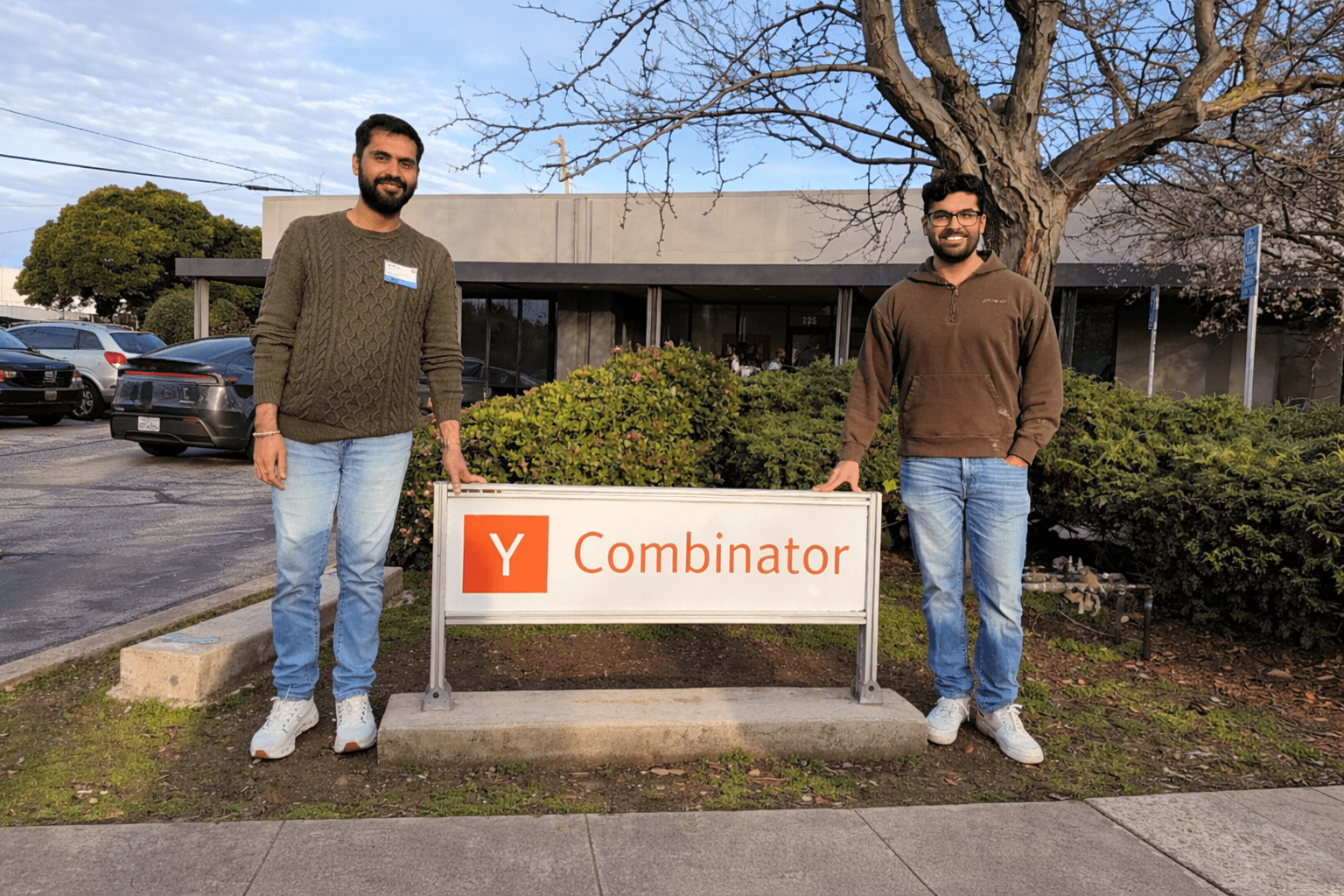

RunAnywhere, a Y Combinator-backed startup founded by Sanchit Monga and Shubham Malhotra, is building the infrastructure to deploy AI directly on consumer and enterprise devices rather than relying on cloud servers. The founders identify a critical gap in current AI deployment: cloud-first models excel in demonstrations but struggle with real-world constraints including latency, privacy vulnerabilities, connectivity issues, and escalating cloud expenses. Their philosophy is clear — "Latency is a feature. Privacy is a feature. Reliability is a feature." Beyond basic inference, the platform tackles the difficult operational problems that most companies overlook: distributing models across millions of devices with varying hardware, managing memory constraints, ensuring runtime compatibility, and orchestrating over-the-air updates. Sanchit's background in mobile development at Intuit provided firsthand insights into why deployment fails at scale. The company offers a unified SDK enabling single integration across iOS, Android, and edge devices, with a control plane that manages model versions, rollout policies, and performance tracking across thousands of devices simultaneously. Voice AI is a primary focus area, with always-on wake word detection, low-latency speech-to-text, and offline LLM execution representing key practical achievements. Voice interfaces particularly benefit from on-device processing since users immediately perceive latency. The founders envision on-device-first computing becoming the default approach, with cloud services acting as optional fallback infrastructure rather than the primary processing layer.

March 6, 2026

Why the Next Wave of AI Will Run on Your Phone

In this CEOWORLD Magazine feature, RunAnywhere founders Sanchit Monga and Shubham Malhotra make the case for why AI's future lives on smartphones and edge devices — not in the cloud. As they explain, "Models are getting smaller and faster, but deployment is still broken. That's the layer we're focused on fixing." The article explores how current AI deployment faces significant friction from high latency, unreliable connectivity, privacy concerns, and escalating cloud compute expenses that limit practical AI applications in real-world products. RunAnywhere's two-part system — an SDK for cross-platform integration and a control plane for managing model versions, rollout policies, observability, and over-the-air updates — allows companies to ship AI updates without app store delays. The platform intelligently routes between on-device and cloud execution based on need. Sanchit's mobile development background at Intuit and Shubham's infrastructure experience at Microsoft Azure and AWS inform a deeply practical approach to distributed systems and production reliability. The article highlights voice AI as the "killer app" for edge computing, with on-device wake word detection, low-latency speech recognition, and offline language model capabilities. As Shubham puts it, "On-device AI delivers all three: speed, privacy, and reliability." RunAnywhere operates as a SaaS platform with open-source components driving adoption and enterprise features generating revenue — guided by their core philosophy that "constraints are not blockers, they are design inputs."

March 6, 2026

RunAnywhere: The Infrastructure Powering the Edge AI Era

The International Business Times profiles RunAnywhere's production-ready SDK, which enables enterprises to execute AI models directly on edge devices (smartphones, IoT hardware, and existing CPUs/GPUs) rather than routing requests through costly cloud APIs. The article highlights a striking economic reality: only a small percentage of the global population actively uses AI tools today, and cloud-based inference costs of $0.30 to $0.50 per minute for voice AI and vision-language models make scaling to mass adoption prohibitively expensive. RunAnywhere's approach packages foundational frameworks like TensorFlow Lite and Meta's ExecuTorch into enterprise-ready infrastructure, reducing deployment timelines from months of custom engineering to days. The platform solves the fragmentation problem across iOS, Android, and embedded systems, standardizing AI performance across devices, including older hardware typically unsupported by major vendors. This is critical for reaching users beyond the latest flagship phones. Early customers span fintech and gaming enterprises, with growing demand from healthcare, aviation, insurance, and banking: sectors where regulatory requirements or connectivity constraints make local inference essential rather than optional. The company's founding principle, "Intelligence should be as accessible and affordable as water," drives its vision of tapping the idle computing capacity of billions of devices for distributed AI inference at a fraction of cloud costs.

March 2, 2026

Why India's AI Future Will Be Built on the Edge

DNA India explores how India is uniquely positioned to lead in distributed AI infrastructure by using its ecosystem of more than a billion smartphones as edge compute nodes, bypassing the need for expensive centralized cloud systems. The article highlights three competitive advantages: massive scale with over one billion devices, deep technical talent, and a pragmatic approach to cost-efficient AI deployment. With cloud-based multimodal and voice APIs costing $0.30 to $0.50 per minute, expanding AI access to hundreds of millions of Indian users through cloud infrastructure alone is economically infeasible. RunAnywhere's unified, production-ready SDK bridges the gap between basic tooling and production deployment, enabling AI models to run across smartphones, CPUs, GPUs, and IoT devices. The platform reduces integration time from months to days. This is especially critical in India's fragmented device ecosystem, which includes everything from premium smartphones to years-old mid-range Android devices still in active use. As co-founders Sanchit Monga and Shubham Malhotra emphasize, this approach "changes the economics of intelligence." The article argues that edge AI aligns closely with India's broader technology sovereignty objectives. It enables regional-language optimization, sector-specific applications for fintech, healthcare, and telecom, reduced dependency on foreign cloud providers, and improved performance in low-connectivity zones. RunAnywhere's support for older, typically unsupported platforms enables inclusive innovation without requiring users to upgrade their devices.

February 16, 2026

RunAnywhere SDK v0.17.5: Cross-Platform On-Device AI

RunAnywhere SDK v0.17.5 marks a major milestone in cross-platform on-device AI, unifying LLM inference and voice AI capabilities across Swift, Kotlin, Flutter, and React Native. This release enables developers to build privacy-first, offline-capable AI applications with a single integration that works seamlessly across iOS and Android devices. The SDK handles the complex operational challenges of on-device deployment — model distribution, memory optimization, runtime compatibility, and hardware acceleration — so developers can focus on building great AI experiences rather than wrestling with platform-specific implementation details. With built-in support for over-the-air model updates and intelligent cloud fallback, v0.17.5 represents the most production-ready on-device AI toolkit available today.

February 16, 2026

RunAnywhere Launches from Y Combinator as On-Device AI Platform for Running Models Locally on iOS & Android

RunAnywhere (YC W26) officially launches as a platform and SDK for running AI models locally on iOS, Android, and edge devices. The platform enables developers to deploy on-device AI with minimal setup — handling model packaging, device-specific optimization, and runtime management out of the box. With intelligent routing between on-device and cloud processing, apps maintain high performance regardless of connectivity. Backed by Y Combinator as part of its Winter 2026 batch, RunAnywhere addresses the growing demand for privacy-preserving, low-latency AI that works offline. The launch represents months of development focused on making on-device AI deployment as simple as a cloud API call, while delivering the speed, privacy, and reliability advantages that only local computation can provide.

January 22, 2026

RunAnywhere (YC W26) helps apps run AI directly on phones and other edge devices

Y Combinator highlights RunAnywhere as part of its Winter 2026 batch, showcasing the platform's ability to help apps run AI directly on phones and other edge devices. The post emphasizes RunAnywhere's streamlined approach to on-device AI deployment — developers can integrate the SDK with minimal setup and immediately begin executing chat and voice processing locally on user devices. The platform maintains cloud fallback capability for demanding tasks while keeping the majority of AI inference on-device, delivering the speed and privacy benefits that users increasingly expect. Y Combinator's endorsement signals strong investor confidence in the on-device AI infrastructure space and RunAnywhere's position as a leading platform in this rapidly growing category.

January 16, 2026

From Small-Town Punjab to YC: How Sanchit & Shubham Are Building RunAnywhere to Put AI on Every Device

FoundersBrief profiles the journey of Sanchit Monga and Shubham Malhotra — from small-town Punjab to Y Combinator — and their mission to democratize AI by putting it directly on every device. The founders recognized that while powerful AI models were becoming smaller and more efficient, the tools for deploying them to real devices remained fragmented, complex, and inaccessible to most developers. RunAnywhere was born from the conviction that on-device AI shouldn't require months of specialized engineering. The article explores how RunAnywhere consolidates fragmented on-device AI tools into a single, unified open platform that enables fast, private AI to run on everything from flagship smartphones and laptops to affordable $20 edge devices. By abstracting away the complexity of model optimization, hardware compatibility, and deployment logistics, the platform makes sophisticated AI capabilities universally accessible — not just to tech giants with dedicated ML infrastructure teams. Sanchit's experience building mobile products at Intuit and Shubham's deep infrastructure background at Microsoft Azure and AWS gave them a unique perspective on why AI deployment breaks down at scale. Their combined expertise in mobile development and distributed systems positions RunAnywhere to solve the full stack of on-device AI challenges, from initial SDK integration through production monitoring and over-the-air updates.